Abstract

This paper presents OP-Follower, a fully onboard, pure vision-based, obstacle-aware person-following framework for quadruped robots. Unlike existing approaches that rely on LiDAR, external infrastructure, or offboard computation, OP-Follower operates exclusively on visual inputs and runs entirely on a Unitree Go2 equipped with a Jetson Orin NX. The proposed framework bridges the gap between low-frequency, delay-prone visual perception and safety-aware high-rate control by integrating YOLO-World-based open-vocabulary person detection, BoxMOT multi-object tracking, and Kalman filter-based delay-aware state prediction within a unified planning-control architecture that tightly couples image-based visual servoing with nonlinear model predictive control. A decoupled artificial potential field-based visual obstacle avoidance strategy is further employed to ensure collision-free navigation without interfering with target tracking objectives. Comprehensive evaluations in high-fidelity Gazebo simulations and real-world indoor and outdoor environments, including long-distance following, dynamic pursuit-evasion interactions, and multi-person scenarios, demonstrate robust real-time performance with fully onboard processing at a 50 Hz control rate. Compared with a non-predictive baseline, OP-Follower reduces pixel-space and distance tracking errors by up to 57.2% and 50.5%, respectively, achieving representative root-mean-square errors of approximately 13-16 pixels and 0.09-0.11 m under sharp turning motions, while maintaining stable outdoor following over trajectories exceeding 600 m.

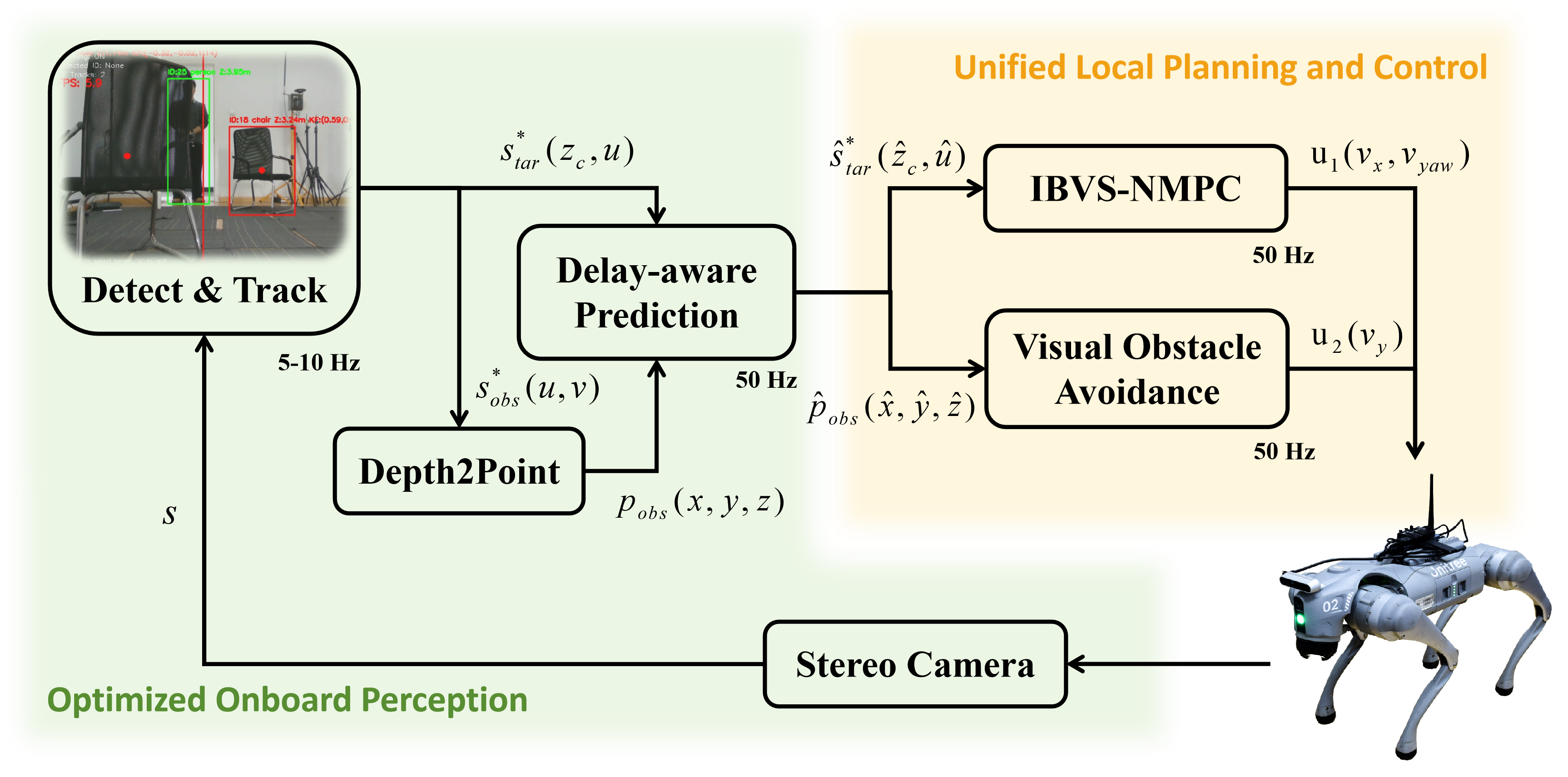

OP-Follower Framework

Overall architecture of the proposed OP-Follower framework, seamlessly integrating YOLO-World detection, BoxMOT tracking, KF prediction, IBVS–NMPC and APF obstacle avoidance.
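To illustrate the idea behind the KF prediction stage, the following is a minimal sketch of delay-aware prediction, not the authors' implementation. It assumes a 1-D constant-velocity motion model applied to a single quantity (for example the pixel coordinate u), and the class name `ConstantVelocityKF`, the noise values `q` and `r`, and the 0.1 s perception delay are all hypothetical. The filter is updated with measurements stamped at capture time, and the estimate is then extrapolated across the latency so the controller acts on a present-time value.

```python
from dataclasses import dataclass


@dataclass
class ConstantVelocityKF:
    """1-D constant-velocity Kalman filter (state: position p, velocity v)."""
    p: float = 0.0      # position estimate (e.g. pixel u or distance Zc)
    v: float = 0.0      # velocity estimate
    # Entries of the symmetric covariance [[p_pp, p_pv], [p_pv, p_vv]].
    p_pp: float = 1e6
    p_pv: float = 0.0
    p_vv: float = 1e6
    q: float = 1.0      # hypothetical process-noise intensity
    r: float = 4.0      # hypothetical measurement-noise variance

    def predict(self, dt: float) -> None:
        # x <- F x with F = [[1, dt], [0, 1]]
        self.p += self.v * dt
        # P <- F P F^T + Q, with Q from a white-noise acceleration model
        p_pp = self.p_pp + 2 * dt * self.p_pv + dt * dt * self.p_vv
        p_pv = self.p_pv + dt * self.p_vv
        self.p_pp = p_pp + self.q * dt ** 3 / 3
        self.p_pv = p_pv + self.q * dt ** 2 / 2
        self.p_vv = self.p_vv + self.q * dt

    def update(self, z: float) -> None:
        # Measurement model z = p + noise, i.e. H = [1, 0]
        s = self.p_pp + self.r
        k_p, k_v = self.p_pp / s, self.p_pv / s
        innovation = z - self.p
        self.p += k_p * innovation
        self.v += k_v * innovation
        # P <- (I - K H) P
        p_pp = (1 - k_p) * self.p_pp
        p_pv = (1 - k_p) * self.p_pv
        p_vv = self.p_vv - k_v * self.p_pv
        self.p_pp, self.p_pv, self.p_vv = p_pp, p_pv, p_vv

    def extrapolate(self, horizon: float) -> float:
        """Position `horizon` seconds ahead; the filter state is unchanged."""
        return self.p + self.v * horizon


def demo(delay=0.1, dt=0.1, speed=100.0, steps=30):
    """Synthetic target moving at constant speed, observed with latency."""
    kf = ConstantVelocityKF()
    for k in range(steps):
        if k > 0:
            kf.predict(dt)
        kf.update(speed * k * dt)           # measurement at capture time
    t_capture = (steps - 1) * dt
    truth_now = speed * (t_capture + delay)  # where the target is right now
    delayed = speed * t_capture              # what the raw measurement says
    compensated = kf.extrapolate(delay)      # delay-compensated estimate
    return truth_now, delayed, compensated
```

Calling `demo()` returns the true present-time position, the stale measurement, and the compensated estimate; on this synthetic constant-velocity input the compensated value lies much closer to the truth than the stale one.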

Simulation Evaluation

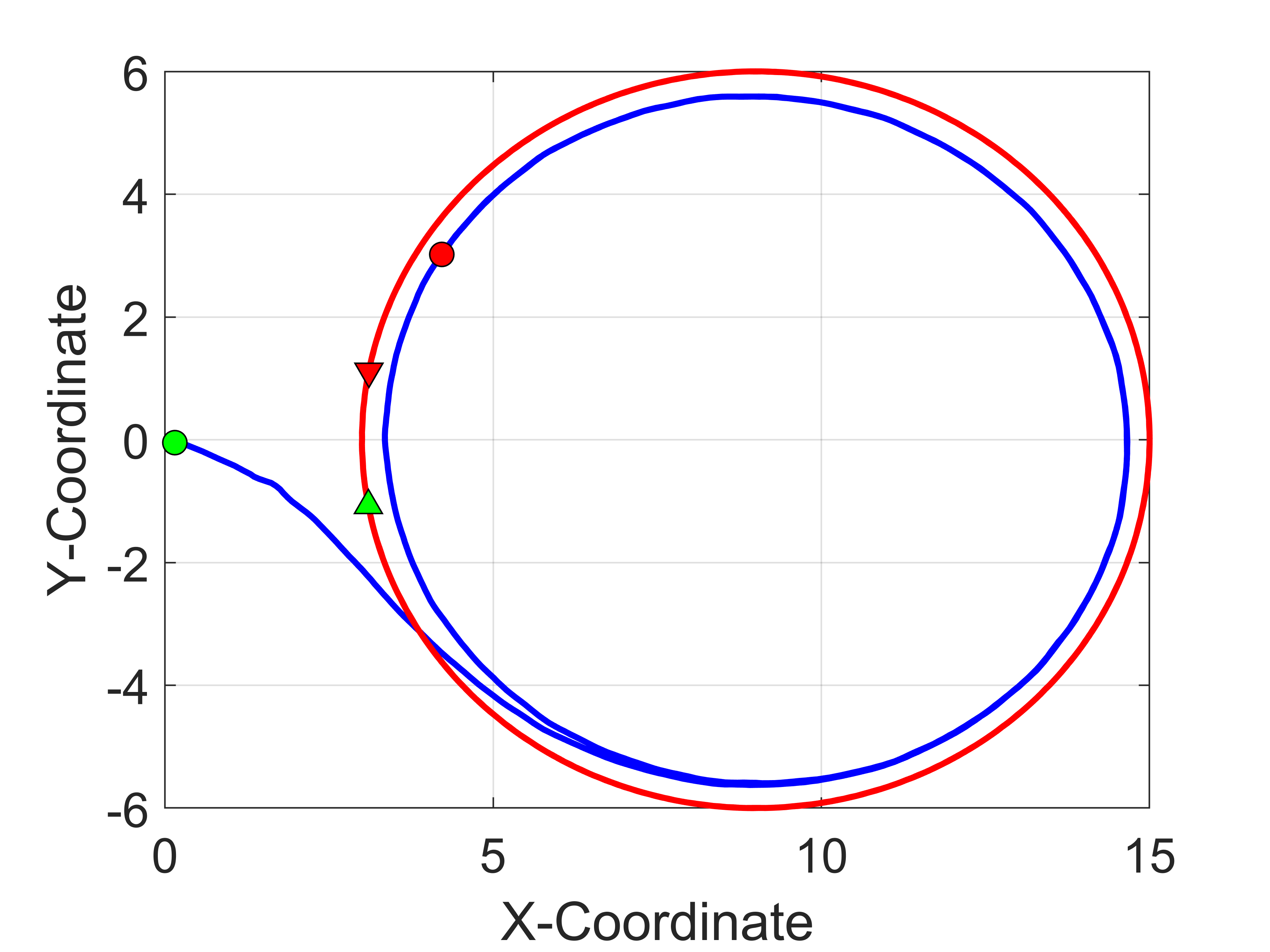

Robust Tracking Performance Across Diverse Trajectories

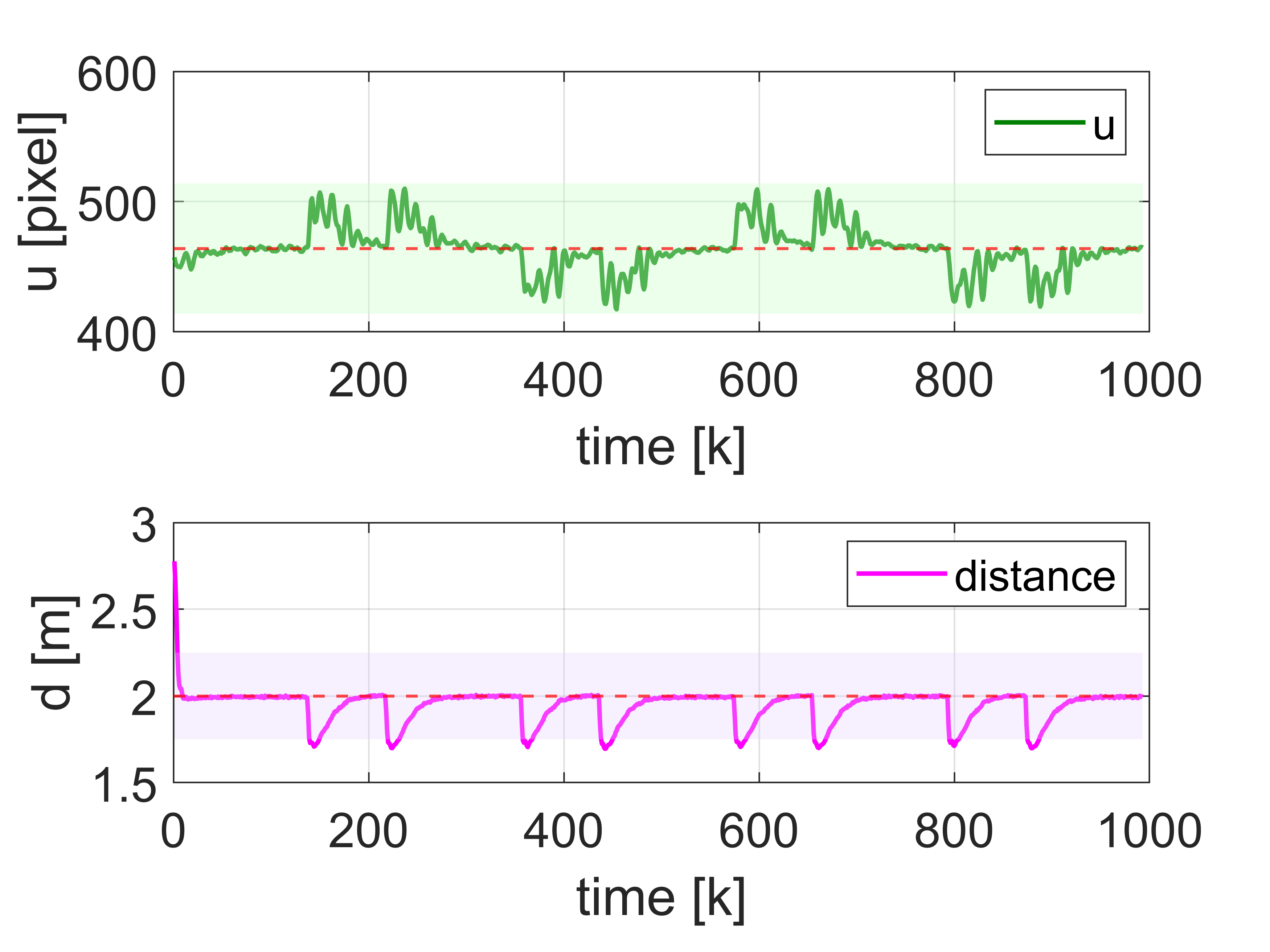

(a) Circle Traj.

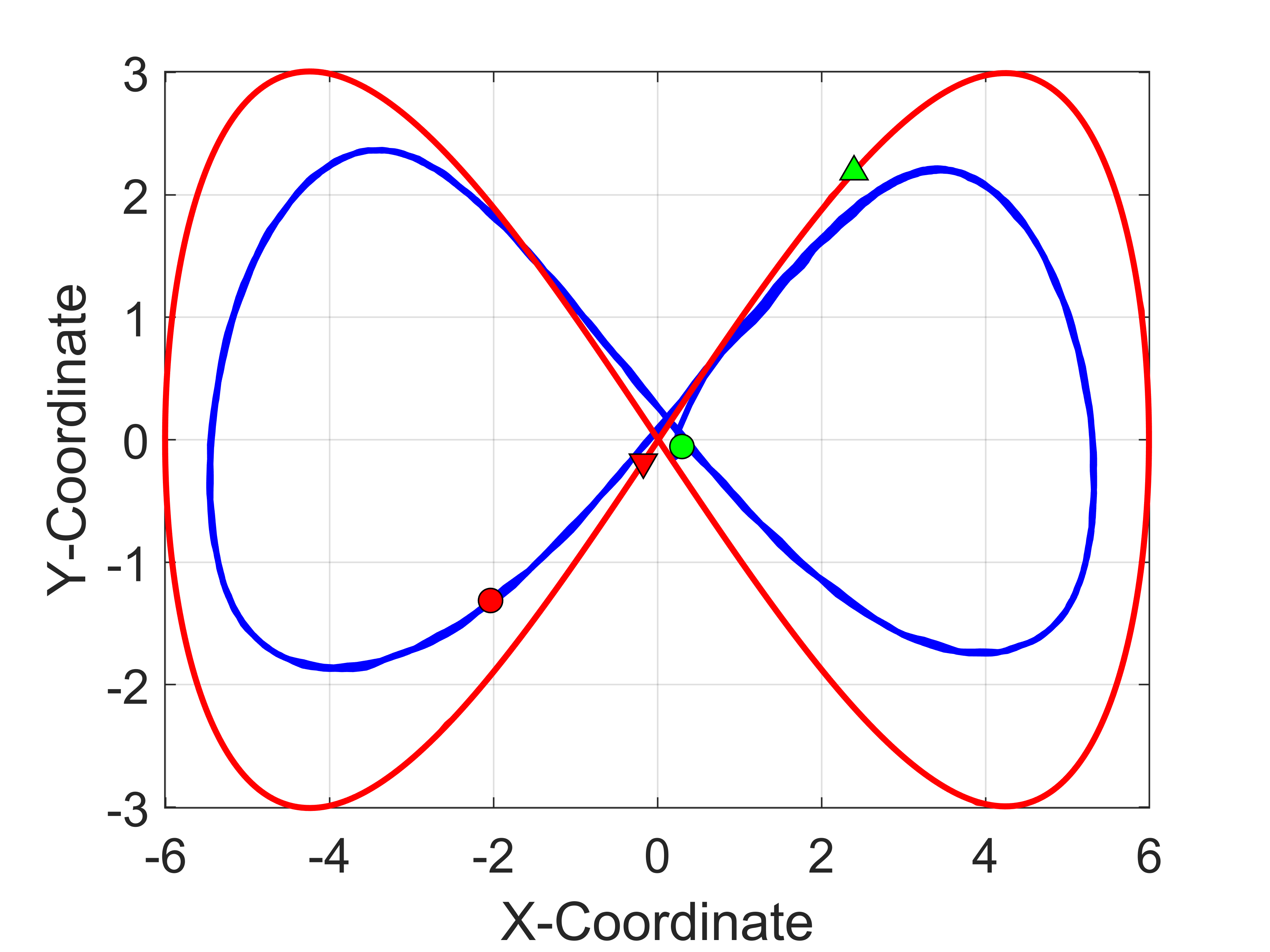

(b) Lemniscate Traj.

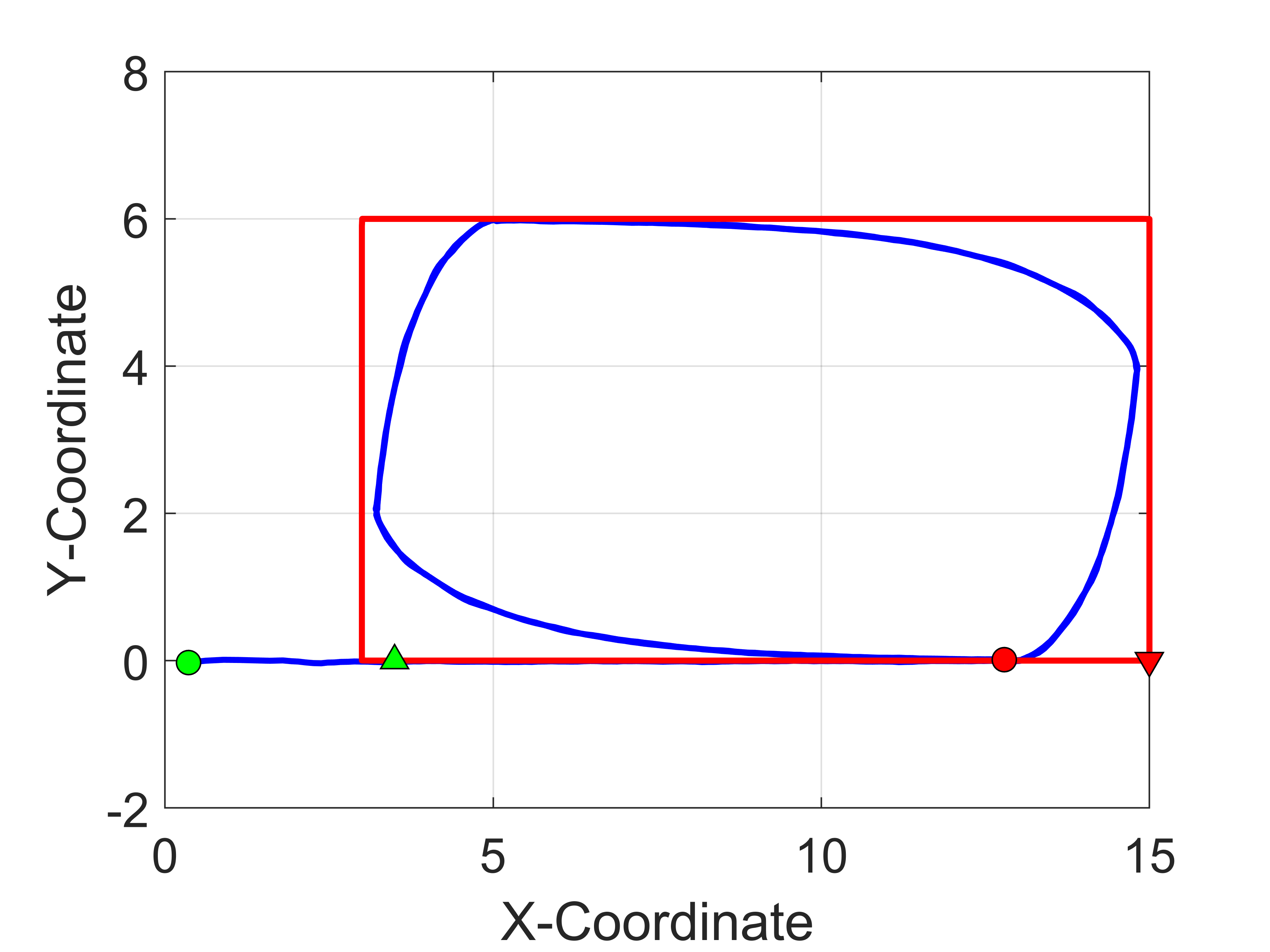

(c) Rectangle Traj.

(d) Double-Triangular Traj.

(e) Circle Met.

(f) Lemniscate Met.

(g) Rectangle Met.

(h) Double-Triangular Met.

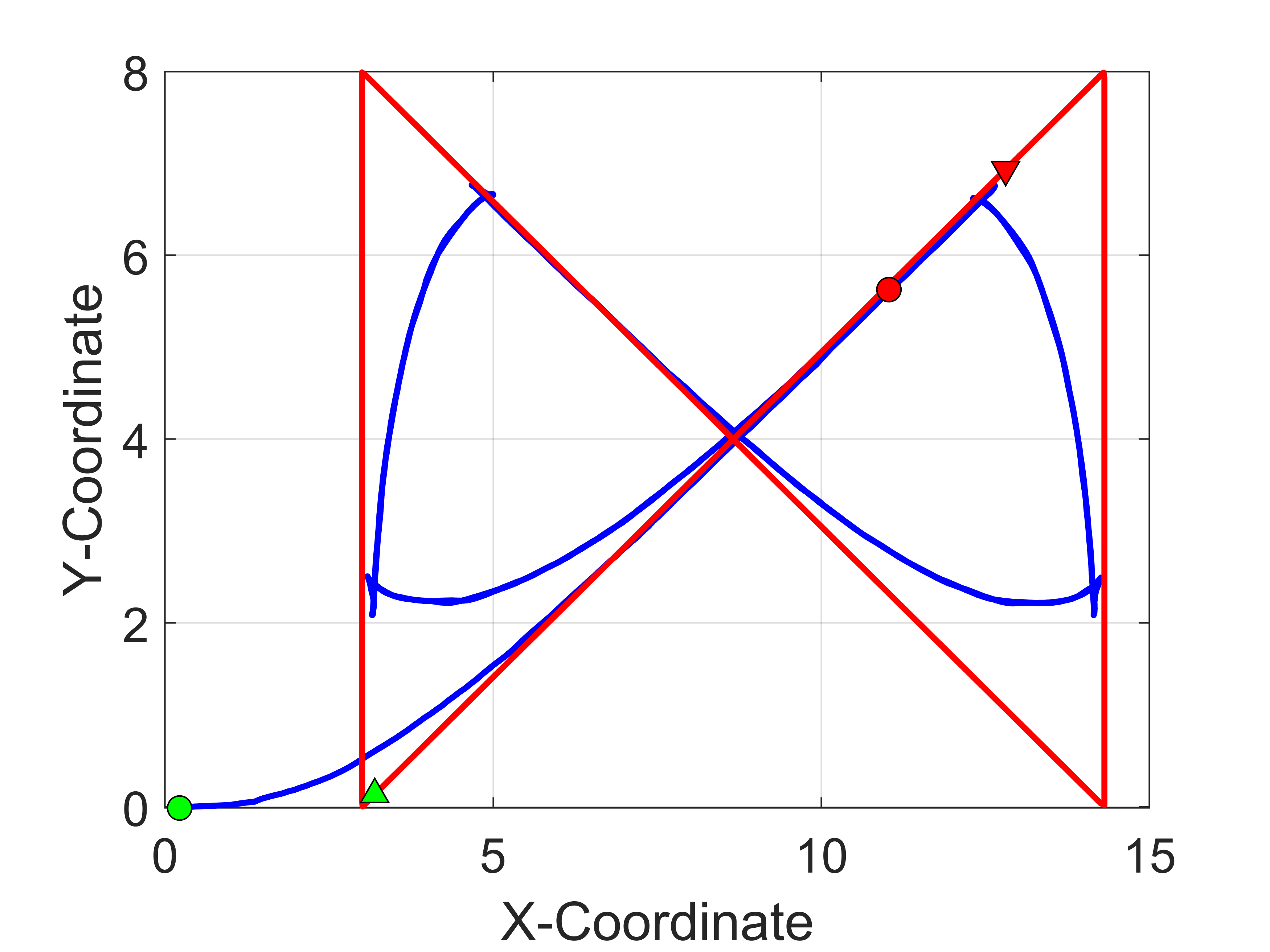

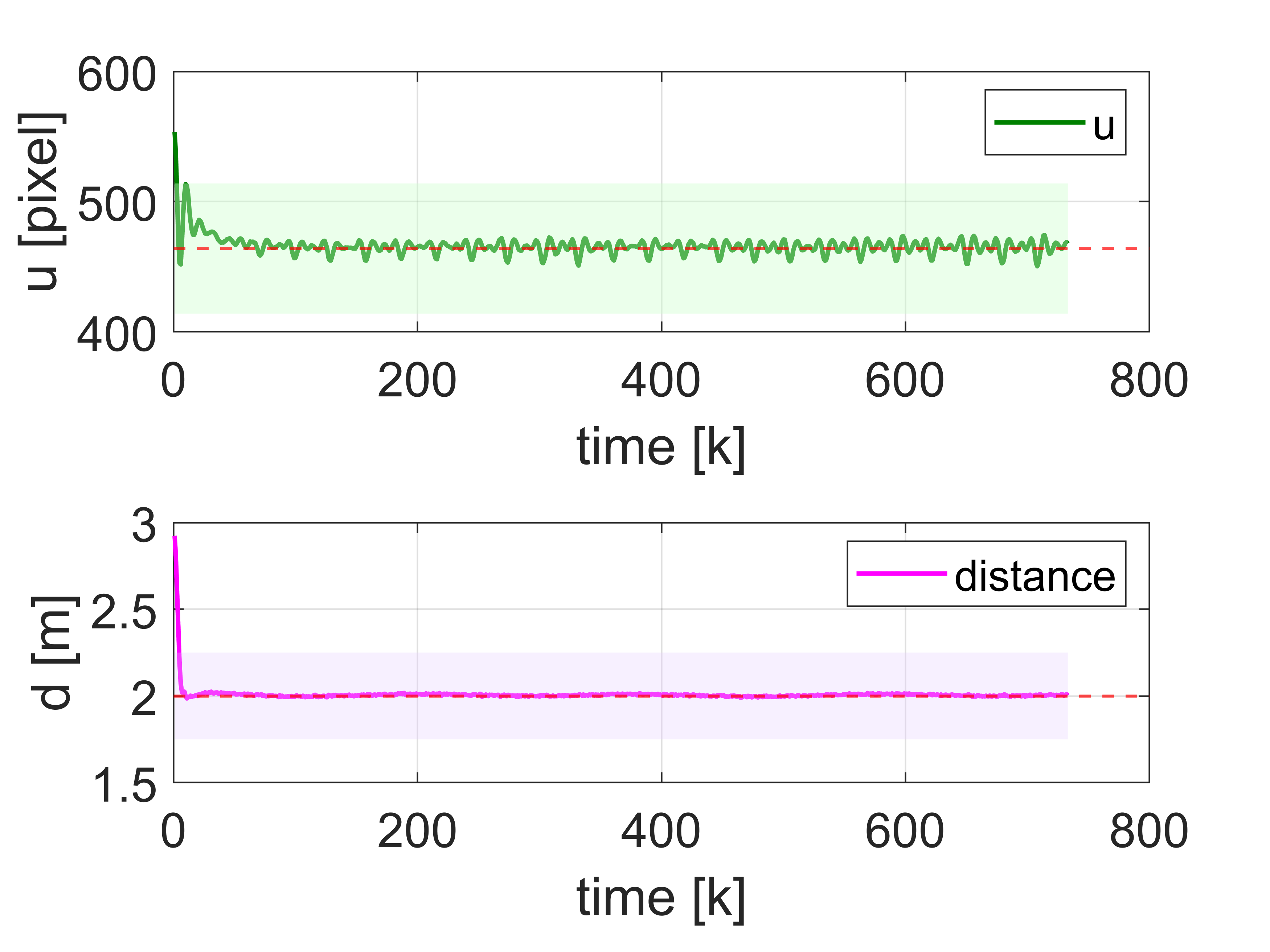

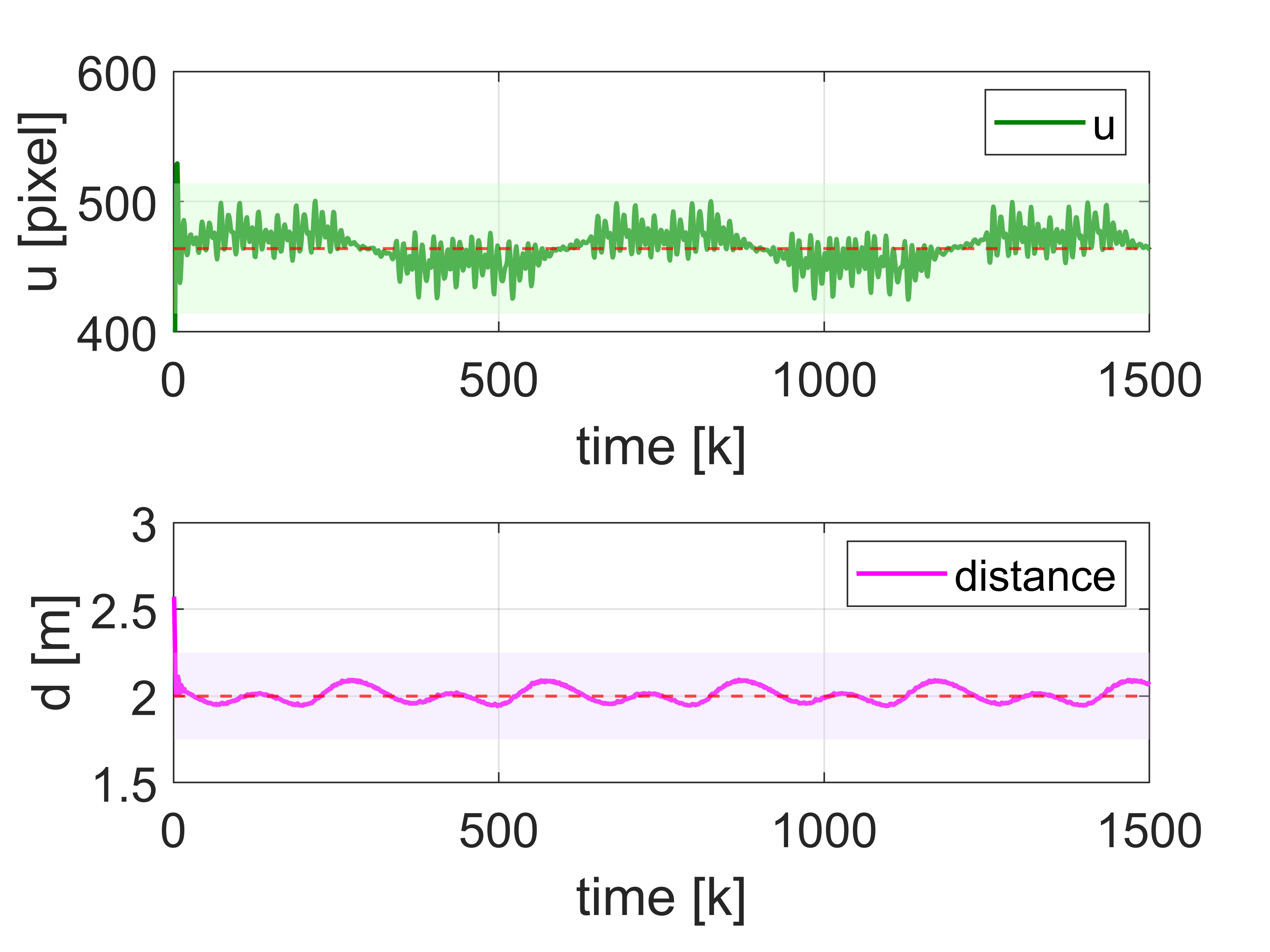

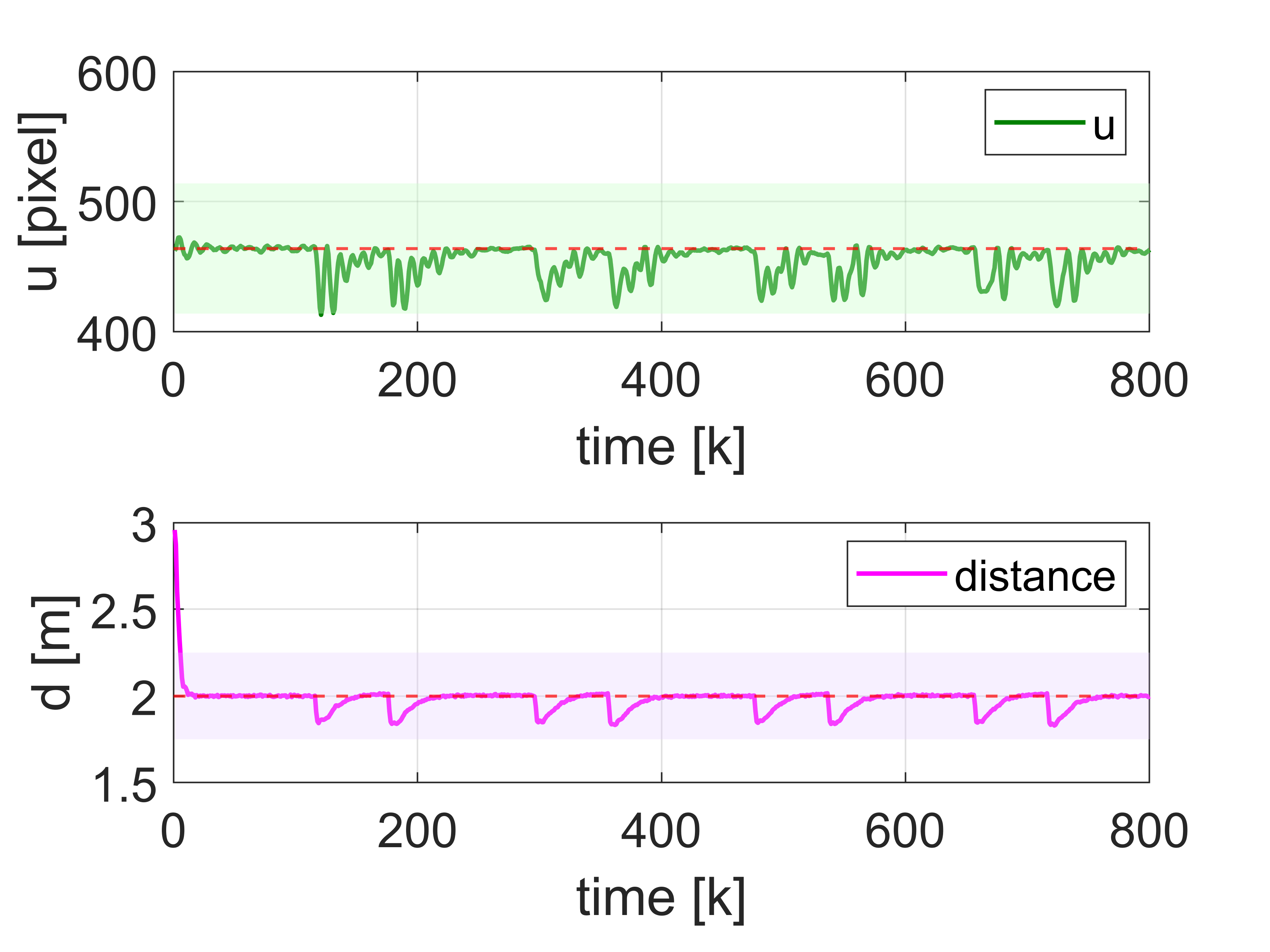

Simulation comparison of OP-Follower tracking performance under different trajectories. Subfigures (a)-(d) depict the trajectories of the target person (red line) and the quadruped robot (blue line), with green and red markers denoting their respective start and end points. Subfigures (e)-(h) present the corresponding performance metrics, where the red dashed lines indicate the reference values: the image-center u-coordinate of 464 pixels and the desired following distance of 2 m.
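As a rough illustration of how tracking errors relative to these references can be summarized, the sketch below computes the root-mean-square error of logged samples against the two reference values from the caption (u = 464 pixels, distance = 2 m). The sample logs are hypothetical placeholders, not data from the experiments, and the function name `rmse` is illustrative.

```python
import math

U_REF = 464.0  # image-center u-coordinate in pixels (from the caption)
Z_REF = 2.0    # desired following distance in meters (from the caption)


def rmse(samples, reference):
    """Root-mean-square deviation of `samples` from a fixed reference value."""
    if not samples:
        raise ValueError("samples must not be empty")
    return math.sqrt(sum((s - reference) ** 2 for s in samples) / len(samples))


# Hypothetical logged values, for illustration only.
u_log = [464.0, 470.0, 458.0, 464.0]
z_log = [2.0, 2.1, 1.9, 2.0]

u_rmse = rmse(u_log, U_REF)  # pixel-space tracking error
z_rmse = rmse(z_log, Z_REF)  # distance tracking error
```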

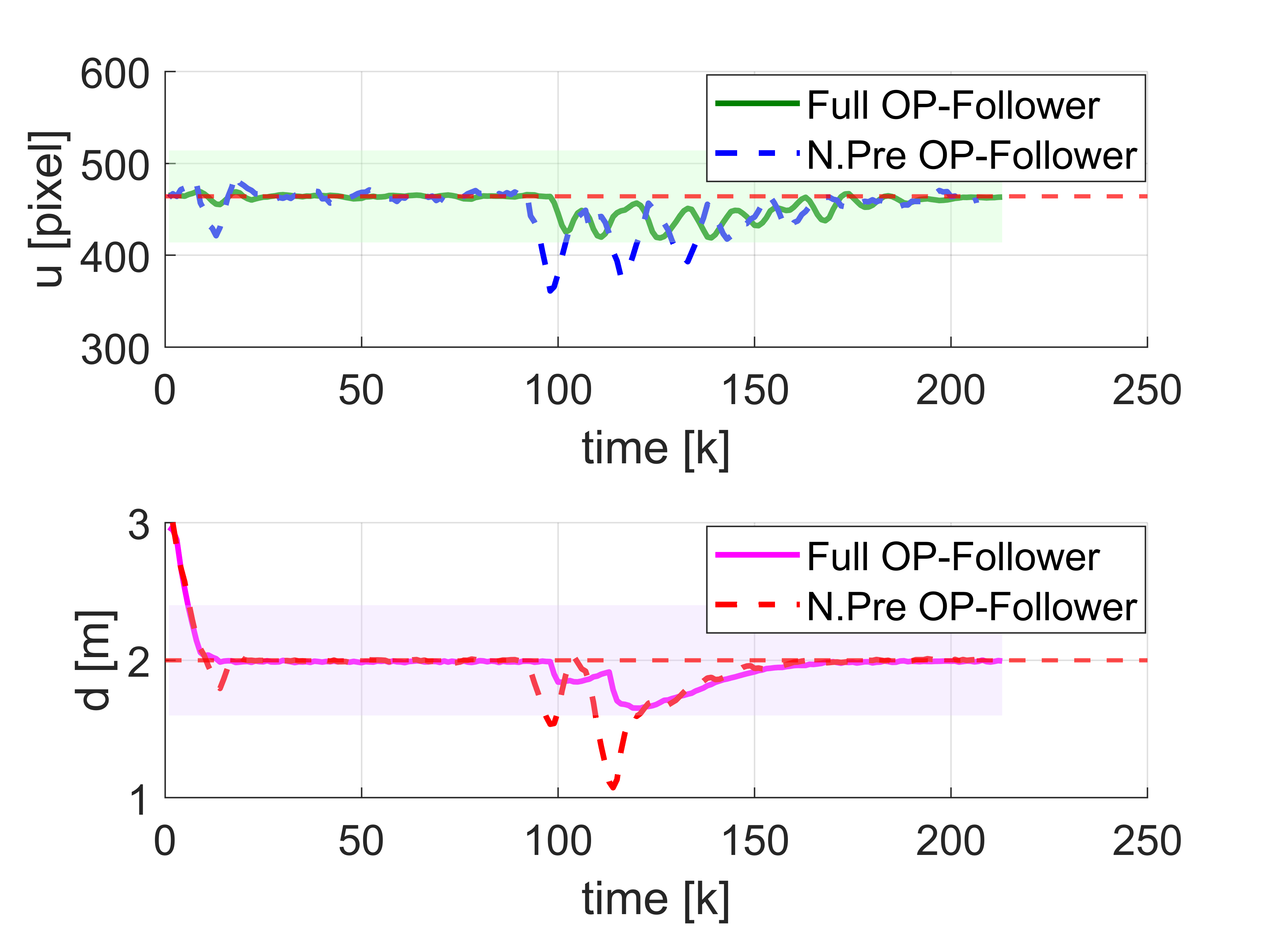

Predictive vs. Non-Predictive Control Comparisons

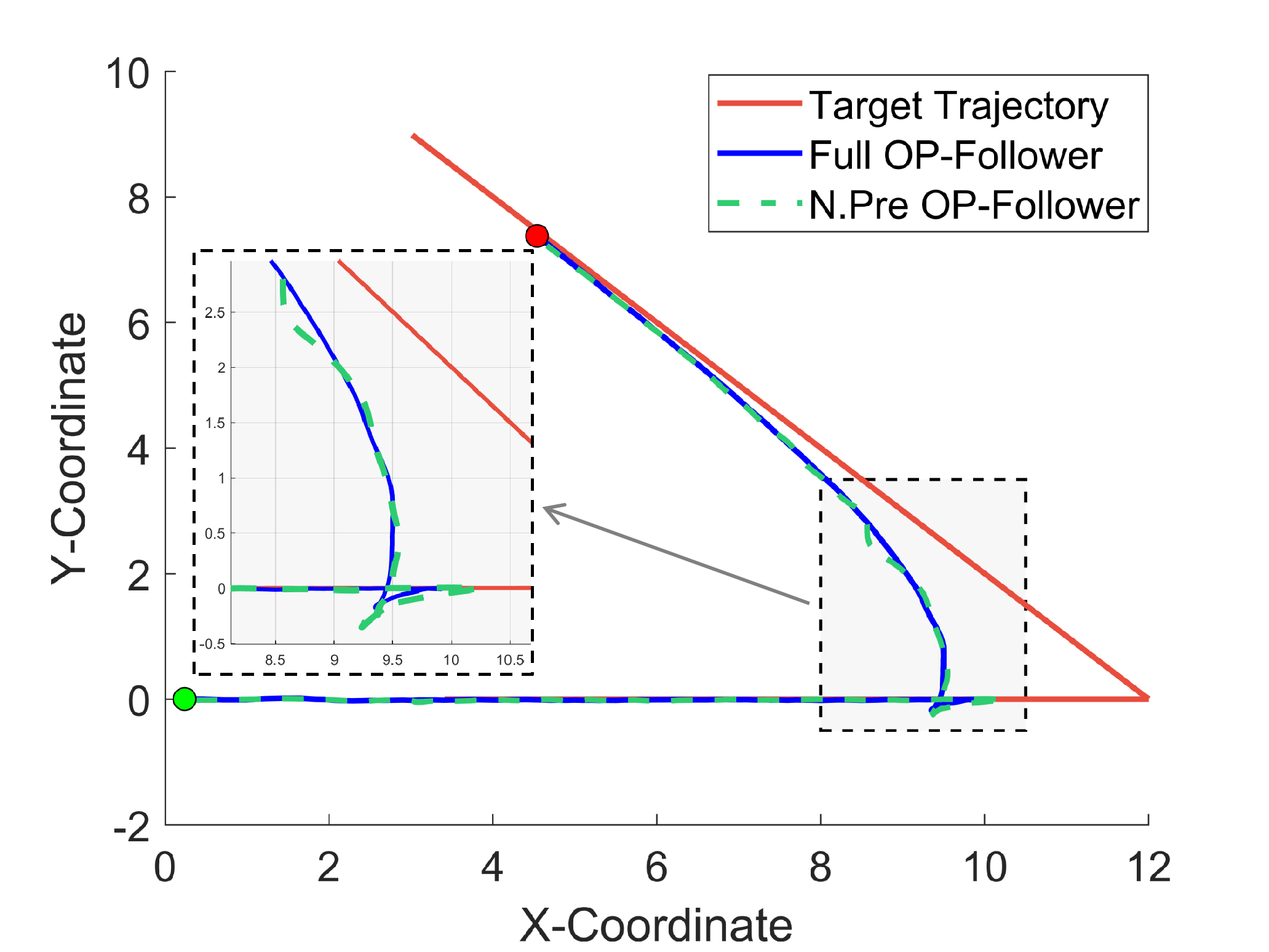

L-Turn (45°) Trajectories.

L-Turn (45°) Metrics.

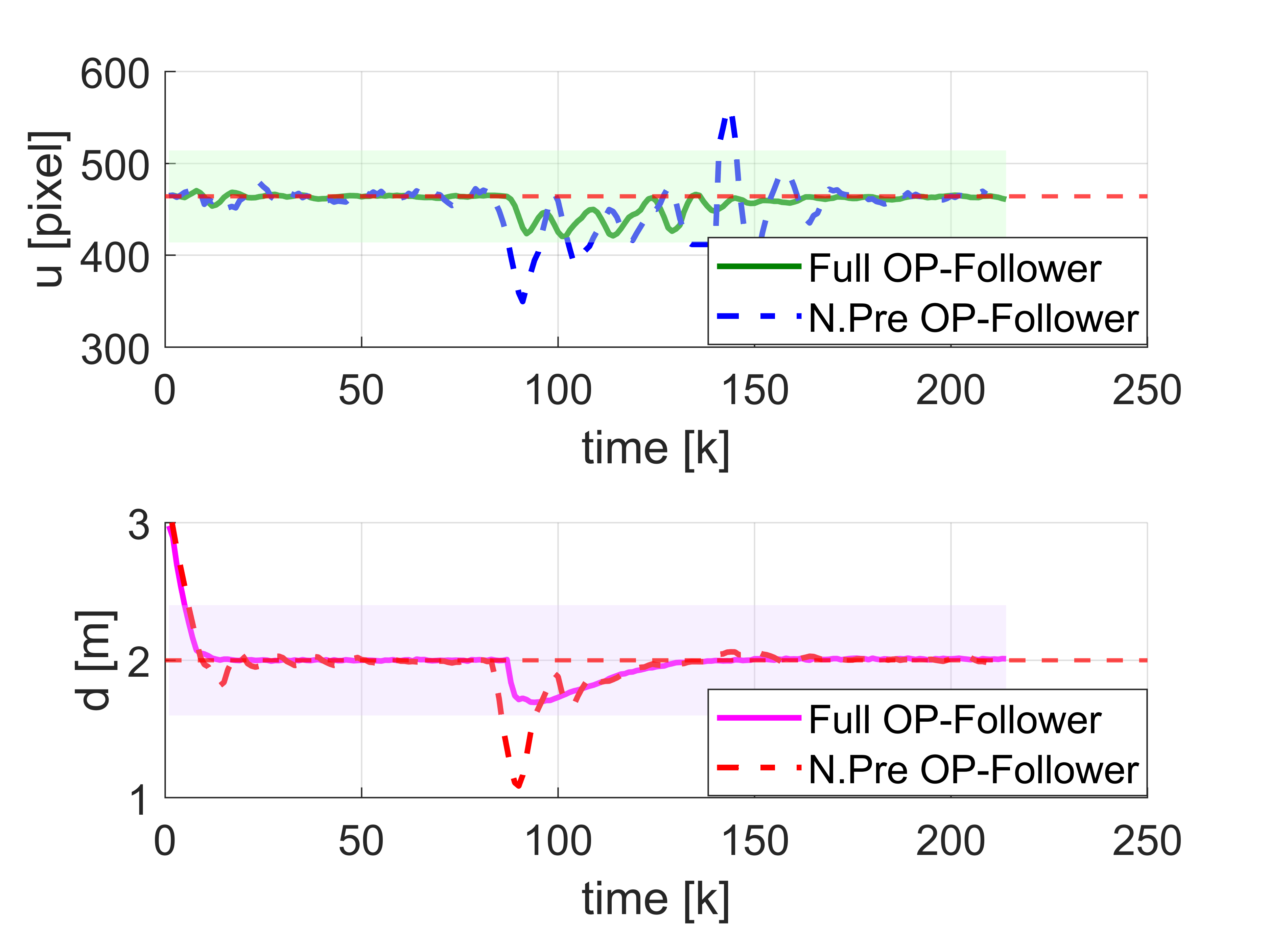

U-Turn Trajectories.

U-Turn Metrics.

Performance of the full OP-Follower against a non-predictive baseline.

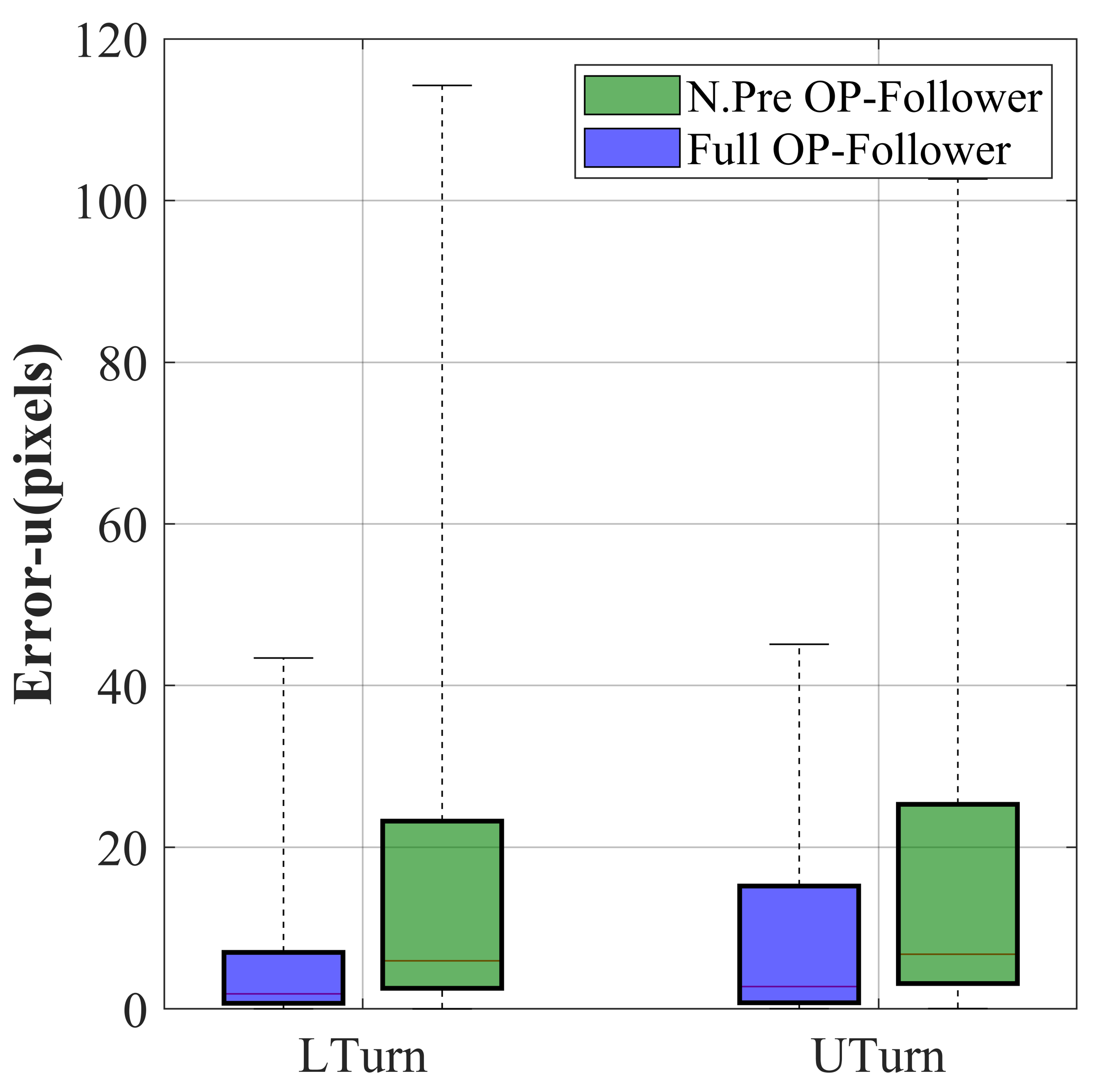

Pixel Error (u)

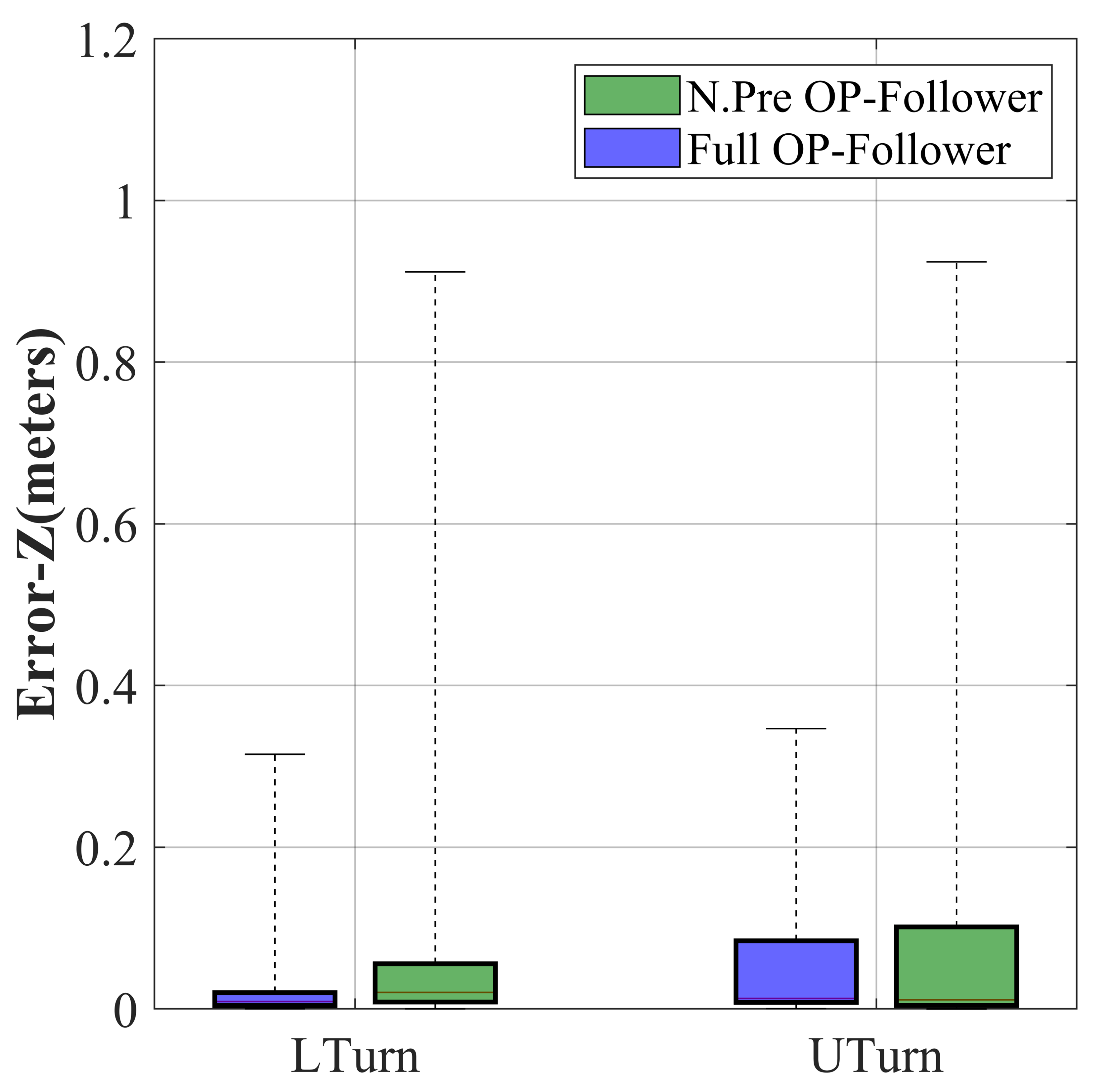

Distance Error (Zc)

Box Plots of Tracking Errors for Full and Non-Predictive OP-Follower.

| RMSE | u (pixels), L-Turn | u (pixels), U-Turn | Zc (meters), L-Turn | Zc (meters), U-Turn |
|---|---|---|---|---|
| N.Pre OP-Follower | 30.9611 | 27.6651 | 0.1888 | 0.1955 |
| Full OP-Follower | 13.2460 | 15.6693 | 0.0935 | 0.1126 |

Full OP-Follower improves on the non-predictive baseline (N.Pre OP-Follower) across all metrics: RMSE falls by 57.2% (L-Turn) and 43.4% (U-Turn) in pixel error u, and by 50.5% (L-Turn) and 42.4% (U-Turn) in distance error Zc.
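The reported reductions follow directly from the RMSE table as (baseline − full) / baseline. The short check below reproduces them from the tabulated values; the dictionary keys and the function name `reduction_pct` are illustrative.

```python
# RMSE values from the table: (non-predictive baseline, full OP-Follower).
rmse_table = {
    "L-Turn u (pixels)": (30.9611, 13.2460),
    "U-Turn u (pixels)": (27.6651, 15.6693),
    "L-Turn Zc (meters)": (0.1888, 0.0935),
    "U-Turn Zc (meters)": (0.1955, 0.1126),
}


def reduction_pct(baseline, full):
    """Relative error reduction of `full` with respect to `baseline`, in percent."""
    return 100.0 * (baseline - full) / baseline


reductions = {
    name: round(reduction_pct(baseline, full), 1)
    for name, (baseline, full) in rmse_table.items()
}

for name, pct in reductions.items():
    print(f"{name}: {pct}% lower RMSE")
```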

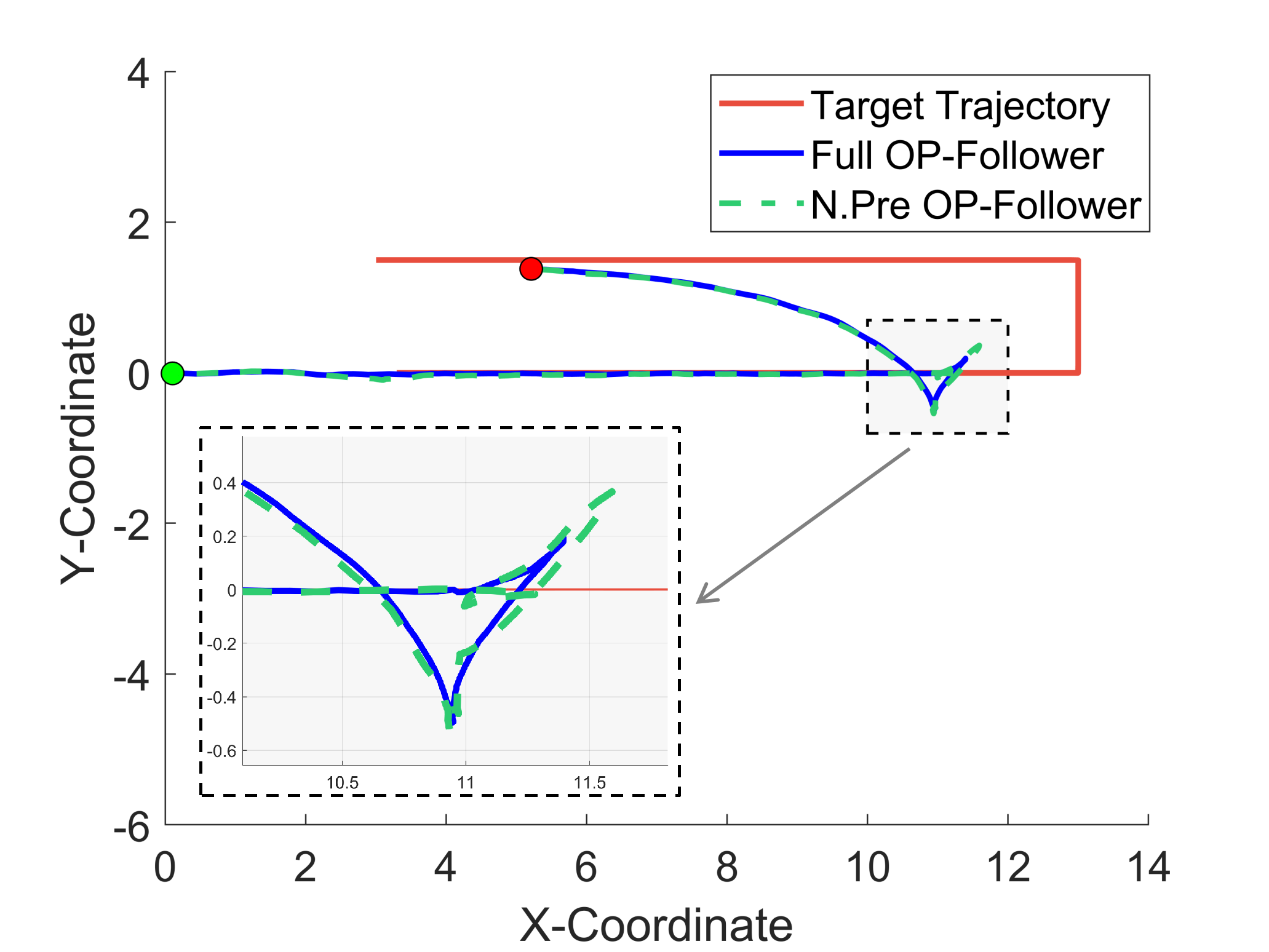

Real-world Experiments

Real-world qualitative results of OP-Follower. Scenarios are designed to validate algorithm robustness under dynamic conditions such as intermittent occlusions, varying lighting, and frequent human-robot interactions.

Long-Distance Following

Pursuit-Evasion Interaction

Visual Obstacle Avoidance

Multi-Person Following